Workbench

Live sync ready.

Head in the cloud, feet on the ground

Upcoming: National Indigenous Peoples Day (Sun Jun 21) · Canada Day (Wed Jul 1)

No. 1 · HN

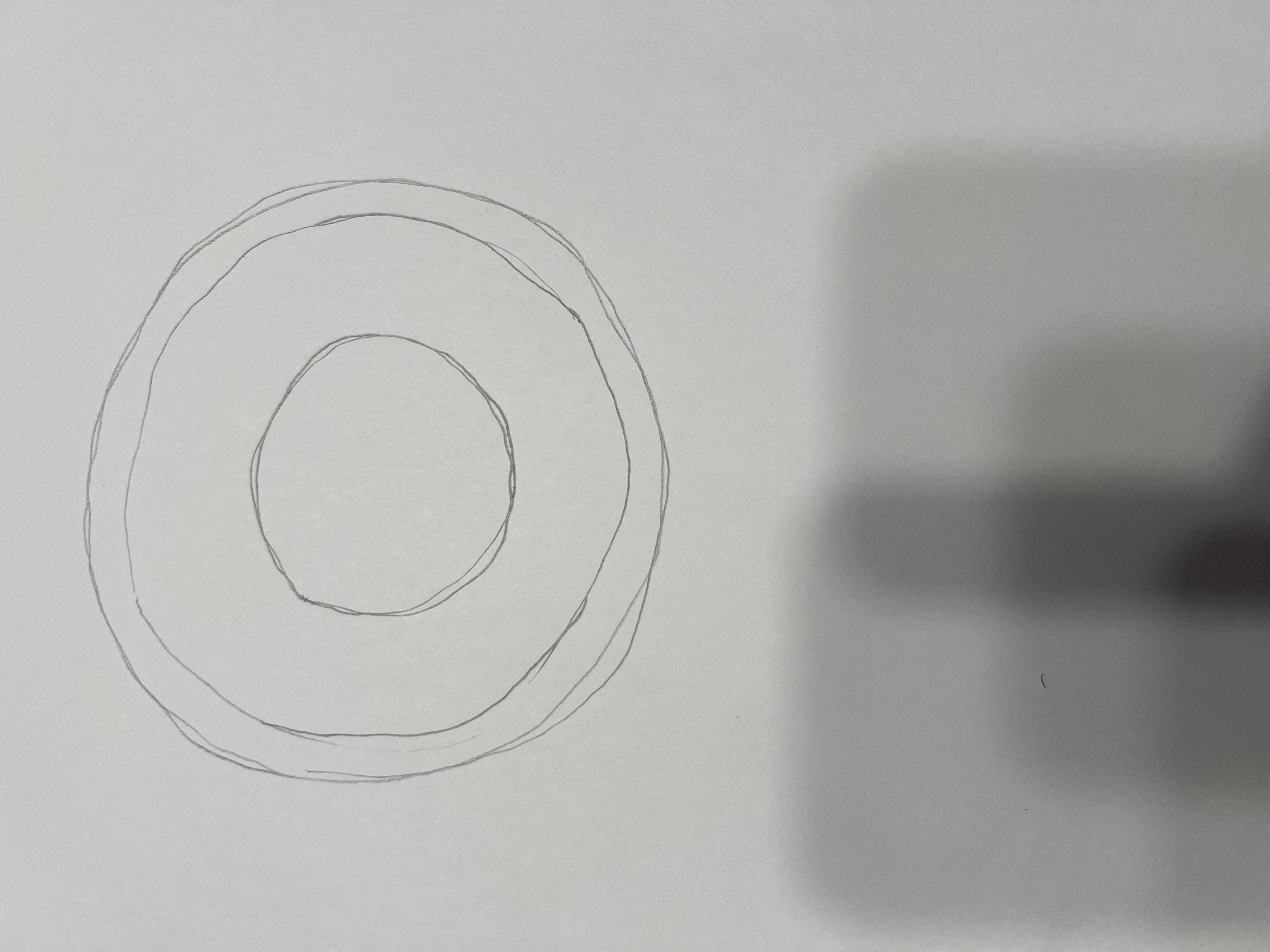

From link: The piece argues that public-sector forms fail when teams optimize for modern-device assumptions instead of the conditions people actually use them under: low-end phones, intermittent connectivity, shared devices, translation needs, and assistive technology. Its case study rebuilds a government-style workflow as a plain, multi-step, progressively enhanced HTML experience, then shows that removing JavaScript dependency and visual clutter did more than improve taste: it materially reduced friction and roughly doubled successful use overnight.

From comments: The HN thread mostly treated the result as a reminder that web complexity is often self-inflicted, especially in government and enterprise systems where reliability matters more than front-end fashion. Commenters compared war stories about brittle SPA forms, pointed out that accessibility and resilience gains usually help every user rather than a niche minority, and debated how much of the industry's tooling culture now hides straightforward product mistakes behind framework inevitability.

No. 2 · HN

From link: The announcement frames PgDog as a startup building the orchestration layer around scaling Postgres, with the company using a new round of funding to keep pushing on sharding, routing, and operational simplicity rather than trying to invent a wholly new data model. The notable tone of the post is its restraint: it sells trust in a very specific pain point, arguing that a lot of real-world database scale problems are still about careful systems work, boring reliability, and making existing Postgres estates easier to run.

From comments: The HN discussion quickly moved from congratulations into skepticism about the economics and differentiation of managed database infrastructure. Readers asked whether the company is primarily a control-plane business, how durable the moat is against hyperscaler offerings, and whether teams actually want yet another layer between application code and Postgres. Even so, the thread had a practical undertone, with experienced operators acknowledging that painful scaling and tenancy problems persist long after generic "just use Postgres" advice stops being useful.

No. 3 · HN

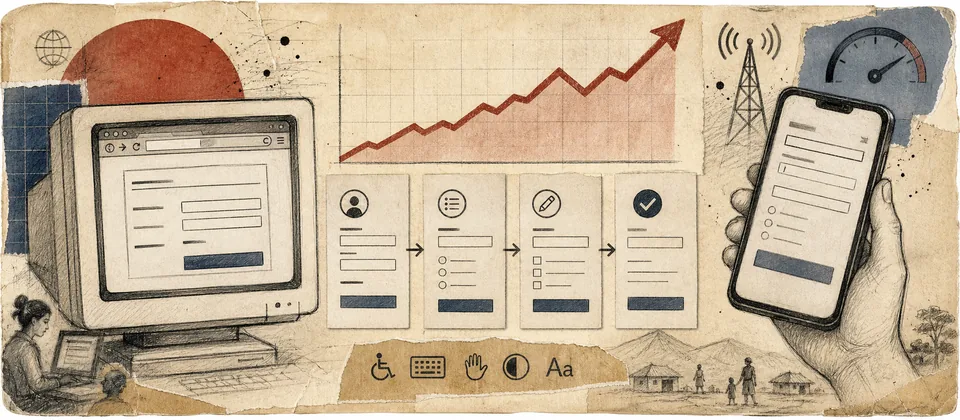

From link: The page is essentially a lovingly maintained field notebook of Japan's rail network, blending station listings, route context, and map-heavy presentation into something that feels halfway between travel tool and personal archive. What makes it compelling is the density of care: instead of flattening the system into a generic transit app view, it preserves the texture of lines, places, and station relationships, which makes the underlying infrastructure feel legible as a lived geographic system rather than just an origin-destination query.

From comments: The HN thread read like a meeting point between rail nerds, map people, and web tinkerers. Commenters compared the project to other transit visualizations, asked about data freshness and route provenance, and traded notes on how unusually rich Japanese station geography becomes when you stop compressing it into a product UI. The general reaction was that the page succeeds because it does not over-productize itself: it feels personal, deep, and useful precisely because its author cared enough to keep the details visible.

No. 4 · HN

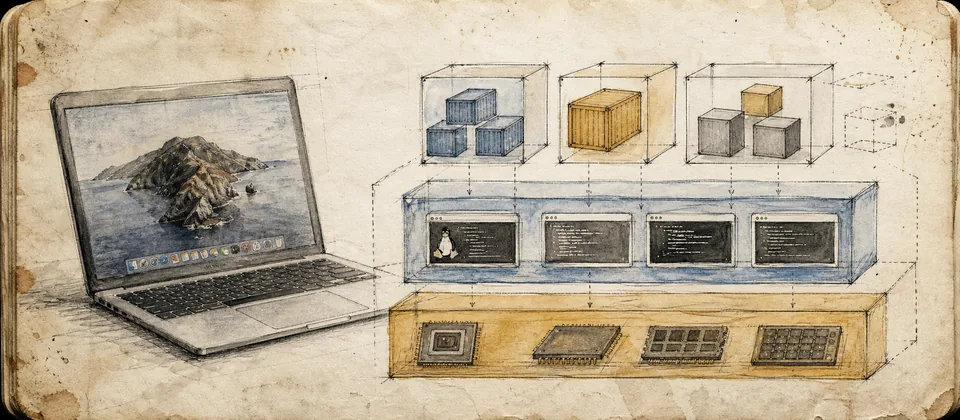

From link: The Apple documentation describes a "container machine" as a managed Linux VM artifact that can be created from a root filesystem or restored from a saved image, giving developers a more explicit substrate for running containers on macOS. Rather than pretending containers are native on the host, the design leans into the VM boundary and exposes lifecycle commands around build, boot, import, and export, which makes the system feel closer to a cleanly documented runtime primitive than another magic desktop wrapper.

From comments: The HN thread focused less on hype than on taxonomy and tradeoffs: readers debated whether this is meaningfully different from existing Docker, Colima, Lima, or OrbStack arrangements, and whether Apple is simply productizing a boundary developers should have understood all along. A lot of the discussion centered on security, performance, and operational clarity, with commenters broadly appreciating that the docs make the Linux-on-Mac reality more visible even if the practical day-to-day value still depends on tooling integration above it.

No. 5 · HN

From link: The essay argues that modern laptop keyboards too often treat the function row as a media-control strip first and a precision tool second, forcing developers and other heavy shortcut users to negotiate a modifier key for actions they hit constantly. The piece is small but sharp because it reframes the annoyance as interface prioritization: the hardware is making an opinionated bet about what should be easiest to reach, and for many technical workflows that bet still feels backwards.

From comments: The HN conversation split along familiar ergonomic lines, with some people insisting the problem disappears once you remap keys or use an external keyboard and others arguing that needing a workaround is exactly the indictment. Commenters compared Apple, ThinkPad, and custom keyboard habits, drifted into broader complaints about laptop input compromises, and largely agreed that function-row design is one of those small UX decisions that becomes disproportionately irritating when it interrupts muscle memory hundreds of times a day.

No. 6 · HN

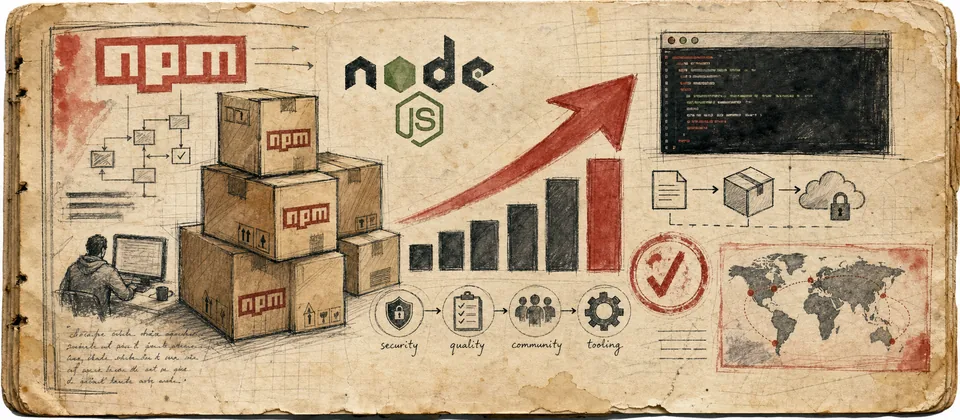

From link: The GitHub changelog for npm v12 is a concentrated list of ecosystem friction points being turned into explicit policy: support for Node 18 and 20 is ending, Corepack is no longer bundled, `npx` behavior is changing, token and OTP expectations are tightening, and lifecycle scripts are moving behind an `allowScripts` gate. Taken together, the release reads less like a cosmetic major bump and more like a continuing attempt to reduce ambient trust in package execution while forcing maintainers and CI systems to be more deliberate about their toolchain assumptions.

From comments: The HN thread was pragmatic but wary, with commenters balancing agreement on supply-chain hardening against dread about another round of breakage in builds, onboarding docs, and long-neglected packages. People debated whether the stricter defaults are overdue, how much pain the Node-version floor will create for slower-moving orgs, and whether npm keeps asking the ecosystem to absorb coordination costs that its own historical design decisions created. The consensus was that the direction makes sense, but the migration tax will land unevenly and loudly.